privateGPT

Ask questions about your documents without an internet connection, using the power of LLMs. 100% private: no data leaves your execution environment at any point. You can ingest documents and ask questions entirely offline.

Built with LangChain, GPT4All, and LlamaCpp.

Environment Setup

- install all requirements,

- download the weights, and

- download the ingested index.

pip install -r requirements.txt

Then, materialize the model weights and the ingested index (the models and db folders) with:

git xet checkout models db

Quickstart

Instructions for ingesting your own dataset

Put all of your .txt, .pdf, or .csv files into the source_documents directory.
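To check which files ingestion will pick up, a small Python helper can list them by extension. This is a minimal sketch, not part of privateGPT: list_source_documents is a hypothetical name, the extension set mirrors the formats listed above, and it also searches subfolders via rglob, which ingest.py may or may not do.

```python
from pathlib import Path

# Extensions this README lists as supported inputs (assumption: matched case-insensitively).
SUPPORTED_EXTENSIONS = {".txt", ".pdf", ".csv"}


def list_source_documents(source_dir: str = "source_documents") -> list[Path]:
    """Return files under source_dir (including subfolders) with a supported extension.

    Hypothetical helper for inspecting inputs; it does not ingest anything.
    """
    root = Path(source_dir)
    if not root.is_dir():
        # Missing folder: nothing to ingest.
        return []
    return sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() in SUPPORTED_EXTENSIONS
    )
```

Calling list_source_documents() from the repository root returns the candidate files in sorted order; anything with another extension (for example .docx) is skipped.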

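Run the following commands to ingest all the data. Deleting the db folder first discards the bundled example index, so that only your own documents end up in the vectorstore: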

rm -rf db

python ingest.py

It will create a db folder containing the local vectorstore. This can take a while, depending on the size of your documents.

You can ingest as many documents as you want, and all will be accumulated in the local embeddings database.

If you want to start from an empty database, delete the db folder.
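On systems without rm (for example, the Windows command prompt), the same reset can be done from Python. A minimal sketch: reset_vectorstore is a hypothetical helper, and the folder name should match PERSIST_DIRECTORY in your .env (default db).

```python
import shutil
from pathlib import Path


def reset_vectorstore(persist_directory: str = "db") -> bool:
    """Delete the local vectorstore folder so the next ingest starts empty.

    Cross-platform equivalent of `rm -rf db`. Returns True if a folder was removed,
    False if there was nothing to delete.
    """
    path = Path(persist_directory)
    if path.is_dir():
        shutil.rmtree(path)
        return True
    return False
```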

Note: during the ingest process no data leaves your local environment. You can run ingestion without an internet connection.

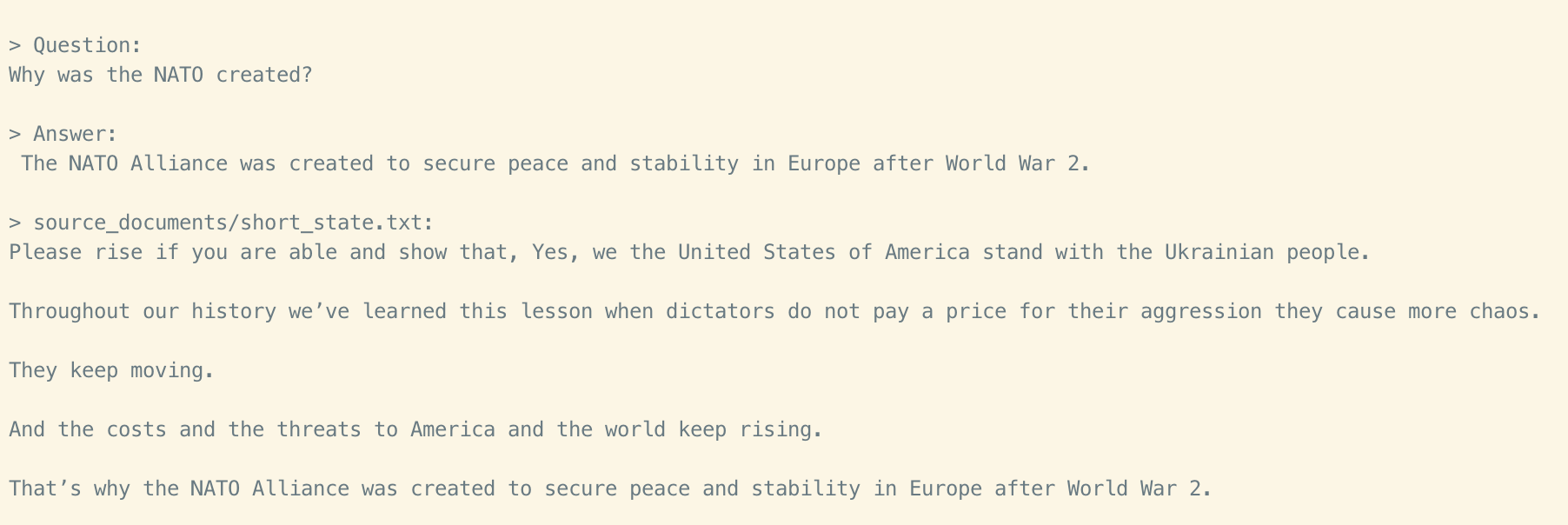

Ask questions to your documents, locally!

To ask a question, run:

python privateGPT.py

Then wait for the script to prompt for your input:

> Enter a query:

Type your question and hit Enter. You'll need to wait 20-30 seconds (depending on your machine) while the LLM consumes the prompt and prepares the answer. Once done, the script prints the answer and the 4 sources from your documents that it used as context. You can then ask another question without re-running the script; just wait for the prompt again.

Note: you can turn off your internet connection and inference will still work. No data leaves your local environment.

Type exit to finish the script.
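The interactive flow above (prompt, print the answer and its sources, repeat until exit) can be sketched as a plain loop. This is an illustration, not the code in privateGPT.py: ask is a hypothetical callable standing in for the retrieval and LLM step, returning the answer text and the source snippets (4 in the default setup described above). Injecting read_line and write keeps the loop testable without a terminal.

```python
from collections.abc import Callable


def run_query_loop(
    ask: Callable[[str], tuple[str, list[str]]],
    read_line: Callable[[str], str] = input,
    write: Callable[[str], None] = print,
) -> int:
    """Prompt for queries until the user types 'exit'; return how many were answered."""
    answered = 0
    while True:
        query = read_line("\nEnter a query: ").strip()
        if query == "exit":
            return answered
        if not query:
            # Empty input: just prompt again.
            continue
        answer, sources = ask(query)
        write(answer)
        # Show each source snippet used as context.
        for source in sources:
            write(f"> {source}")
        answered += 1
```

Called as run_query_loop(my_ask) with the default input and print, it behaves like a terminal prompt; the design keeps the model call behind a single function so the loop itself does not depend on LangChain.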

Options

Weights

- LLM: defaults to ggml-gpt4all-j-v1.3-groovy.bin.

- Embedding: defaults to ggml-model-q4_0.bin.

In the .env file you can edit the following variables:

- MODEL_TYPE: supports LlamaCpp or GPT4All.

- PERSIST_DIRECTORY: the folder where the vectorstore is stored (default: db).

- LLAMA_EMBEDDINGS_MODEL: absolute path to your LlamaCpp-supported embeddings model (default: ggml-model-q4_0.bin).

- MODEL_PATH: path to your GPT4All- or LlamaCpp-supported LLM (default: ggml-gpt4all-j-v1.3-groovy.bin).

- MODEL_N_CTX: maximum token limit for both the embeddings and LLM models (default: 1000).
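The settings above can be read with a few lines of standard-library Python. This is a sketch under stated assumptions: the project's scripts may load .env through a dotenv library instead, parse_env_file and load_settings are hypothetical helpers, and the defaults are the ones listed above. MODEL_TYPE has no documented default, so this sketch requires it to be set.

```python
# Defaults documented in this README (assumption: the real scripts may differ).
DEFAULTS = {
    "PERSIST_DIRECTORY": "db",
    "LLAMA_EMBEDDINGS_MODEL": "ggml-model-q4_0.bin",
    "MODEL_PATH": "ggml-gpt4all-j-v1.3-groovy.bin",
    "MODEL_N_CTX": "1000",
}
SUPPORTED_MODEL_TYPES = {"LlamaCpp", "GPT4All"}


def parse_env_file(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, ignoring blank lines and # comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Drop surrounding whitespace and optional single/double quotes.
        values[key.strip()] = value.strip().strip("'\"")
    return values


def load_settings(env_text: str) -> dict:
    """Merge .env values over the defaults and validate MODEL_TYPE and MODEL_N_CTX."""
    settings = {**DEFAULTS, **parse_env_file(env_text)}
    model_type = settings.get("MODEL_TYPE")
    if model_type not in SUPPORTED_MODEL_TYPES:
        raise ValueError(f"Unsupported or missing MODEL_TYPE: {model_type!r}")
    settings["MODEL_N_CTX"] = int(settings["MODEL_N_CTX"])
    return settings
```

For example, load_settings(Path(".env").read_text()) (with pathlib's Path imported) returns a dict with MODEL_N_CTX converted to an int, and fails fast on a misspelled MODEL_TYPE.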

Note: because of the way LangChain loads the LLaMA embeddings, you need to specify the absolute path of your embeddings model binary. Home-directory shortcuts (e.g. ~/ or $HOME/) may not work.
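One way to sidestep the shortcut problem is to expand the path yourself and paste the result into .env. A minimal sketch using only os.path; to_absolute_model_path is a hypothetical helper.

```python
import os


def to_absolute_model_path(path: str) -> str:
    """Expand $VARS and ~ in path, then return it as an absolute path."""
    expanded = os.path.expanduser(os.path.expandvars(path))
    return os.path.abspath(expanded)
```

For example, to_absolute_model_path("~/models/ggml-model-q4_0.bin") returns the full path under your home directory, which can be used as the LLAMA_EMBEDDINGS_MODEL value.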

Test dataset

This repo uses a State of the Union transcript as an example dataset.

How does it work?

By selecting the right local models and leveraging the power of LangChain, you can run the entire pipeline locally, without any data leaving your environment, and with reasonable performance.

ingest.py uses LangChain tools to parse the document and create embeddings locally using LlamaCppEmbeddings. It then stores the result in a local vector database using the Chroma vector store. privateGPT.py uses a local LLM based on GPT4All-J or LlamaCpp to understand questions and create answers. The context for the answers is extracted from the local vector store using a similarity search to locate the right piece of context from the docs. The GPT4All-J wrapper was introduced in LangChain 0.0.162.
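The retrieval step can be illustrated with a brute-force cosine-similarity search over toy vectors. This is not how Chroma is implemented (its indexing and default distance metric may differ); top_k_chunks and the (text, vector) store layout are hypothetical, and k=4 matches the four sources the script prints.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_chunks(
    query_vec: list[float],
    store: list[tuple[str, list[float]]],
    k: int = 4,
) -> list[str]:
    """Return the texts of the k stored chunks most similar to query_vec."""
    # Score every chunk against the query and keep the highest-scoring k.
    ranked = sorted(store, key=lambda item: cosine_similarity(query_vec, item[1]), reverse=True)
    return [text for text, _vec in ranked[:k]]
```

In the real pipeline the vectors come from the embeddings model and the search runs inside the vector store; an exhaustive scan like this is only practical for small collections.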

System Requirements

Python Version

To use this software, you must have Python 3.10 or later installed. Earlier versions of Python are not supported, and installation or execution may fail on them.
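To confirm your interpreter meets this requirement before running pip install, a check like the following can be used (or simply run python --version). check_python_version is a hypothetical helper, not part of the project.

```python
import sys

MIN_PYTHON = (3, 10)


def check_python_version(version_info=sys.version_info) -> None:
    """Raise RuntimeError if the (major, minor) version is below MIN_PYTHON."""
    if tuple(version_info[:2]) < MIN_PYTHON:
        raise RuntimeError(
            f"privateGPT requires Python {MIN_PYTHON[0]}.{MIN_PYTHON[1]}+, "
            f"found {version_info[0]}.{version_info[1]}"
        )
```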

C++ Compiler

If you encounter an error while building a wheel during the pip install process, you may need to install a C++ compiler on your computer.

For Windows 10/11

To install a C++ compiler on Windows 10/11, follow these steps:

- Install Visual Studio 2022.

- Make sure the following components are selected:
  - Universal Windows Platform development
  - C++ CMake tools for Windows

- Download the MinGW installer from the MinGW website.

- Run the installer and select the "gcc" component.

Disclaimer

This is a test project to validate the feasibility of a fully private solution for question answering using LLMs and vector embeddings. It is not production ready, and it is not meant to be used in production. The model selection is optimized for privacy rather than performance; however, you can use different models and vectorstores to improve performance.
